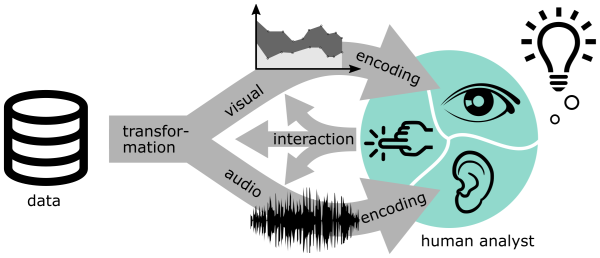

Visualization and sonification are two approaches for conveying data to humans that rely on complementary, high-bandwidth human information processing systems: vision and hearing. Kramer et al. defined sonification as “the use of nonspeech audio to convey information.” Tamara Munzner defines visualization as follows: “Computer-based visualization systems provide visual representations of datasets designed to help people carry out tasks more effectively.” Both visualization and sonification serve to involve human analysts in data analysis. The two approaches also share several methods and design principles, such as the use of perceptual variables to encode data attributes and the role of interaction in manipulating the data representations.

Over recent decades, both fields have established research communities, theoretical frameworks, and toolkit support. Although extensive research has been carried out on both the auditory and the visual representation of data, comparatively little is known about their systematic and complementary combination for data analysis. One example of multimodal research is Keith Nesbitt’s dissertation. Walker and Kramer also pointed out that research on the design and use of multimodal sonification is important for advancing both sonification and visualization research. Combining the two modalities could yield powerful synergies that address the individual limitations of each. Nevertheless, existing research on such combinations has often focused on only one of the modalities.

Truly multimodal approaches should be based on complementary and mutually supportive interplay between data representations in the visual and auditory domains. This workshop series aims to build a community of researchers from both fields, to work towards a common language, and to identify research gaps for a combined theory of visualization and sonification.

Upcoming events:

- to be announced

Past events:

- Dagstuhl seminar (Schloss Dagstuhl, Germany): February 9-14, 2025

- STAR and Panel @ EuroVis 2024 (Odense, Denmark): May 29-30, 2024

- Panel Discussion @ ICAD 2023 (Norrköping, Sweden): June 29, 2023

- AVAC Online Meet-Ups

- Application Spotlight @ IEEE VIS (Oklahoma City, USA/hybrid): October 20, 2022

- 3rd Workshop on Audio-Visual Analytics @ International Conference on Advanced Visual Interfaces (AVI): June 7, 2022

- Workshop @ IEEE VIS (virtual): October 25, 2021

- Workshop @ Audio Mostly (virtual): September 3, 2021